Sony rarely puts the spotlight on semiconductor technology unless it wants the industry to notice. That is what this case study indicates. By actively showcasing a new image stabilization chip, Sony is signaling a deeper shift in how future cameras will handle motion, long before filmmakers see it listed on a spec sheet.

What Sony is actually showing

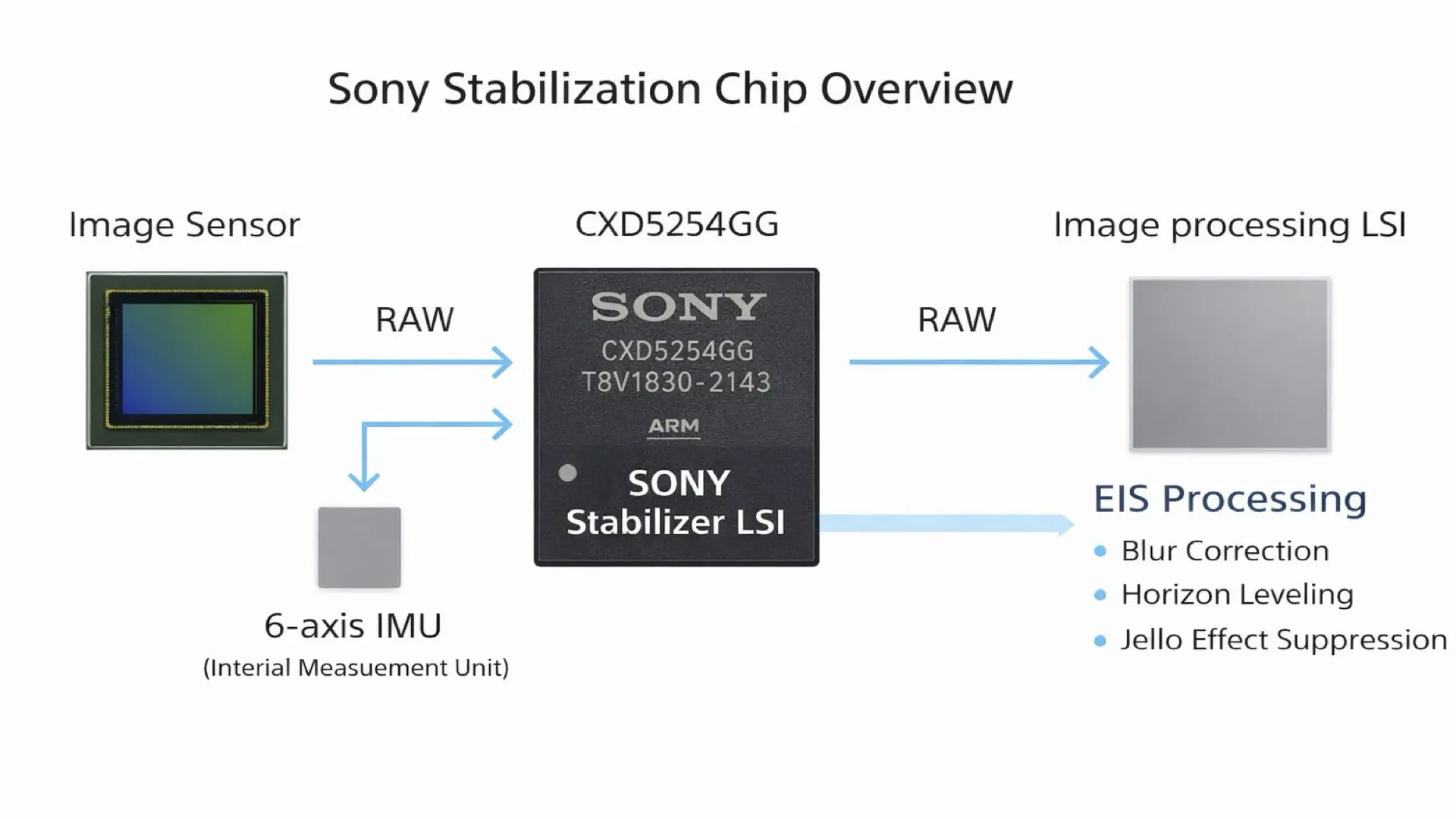

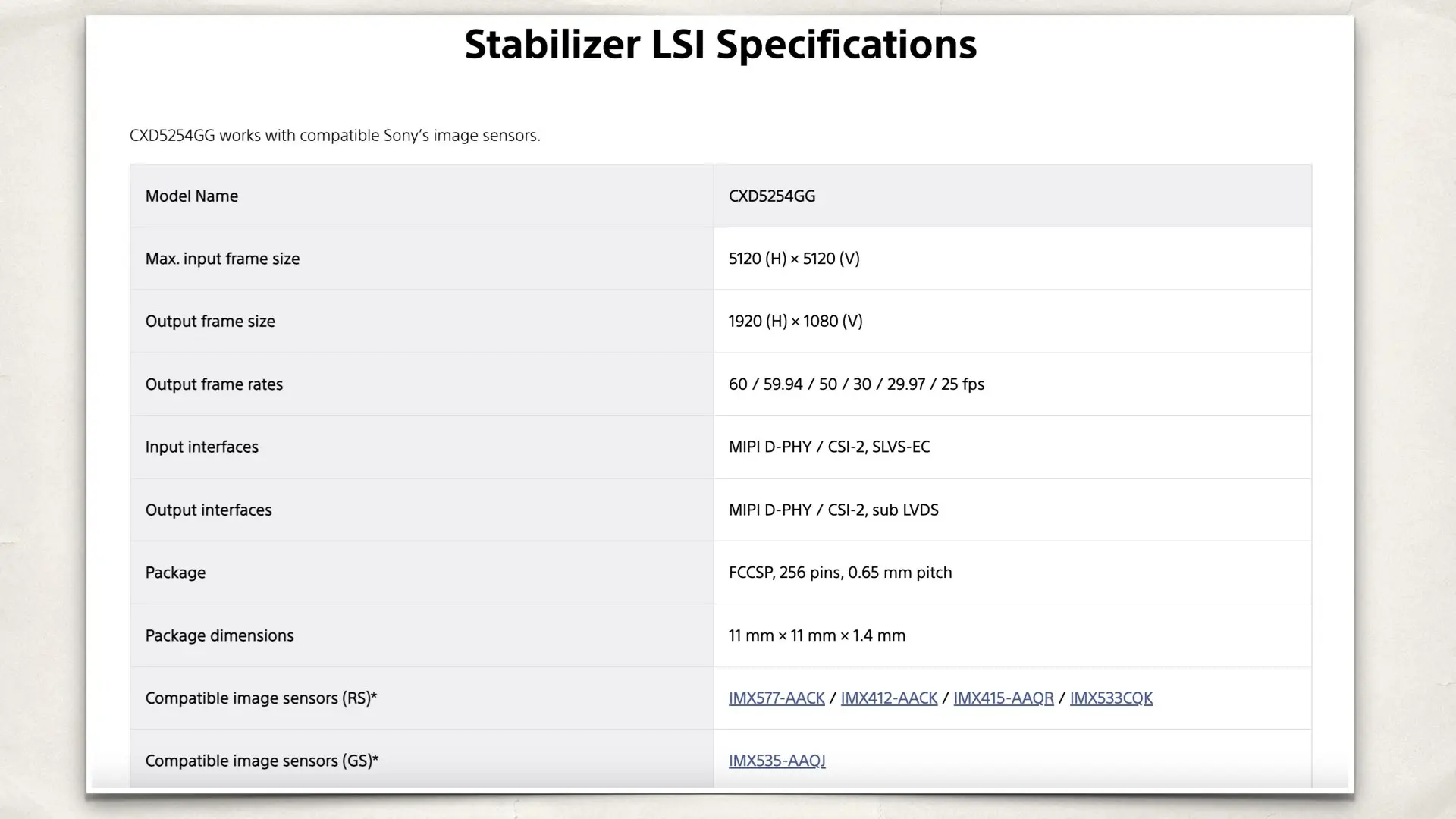

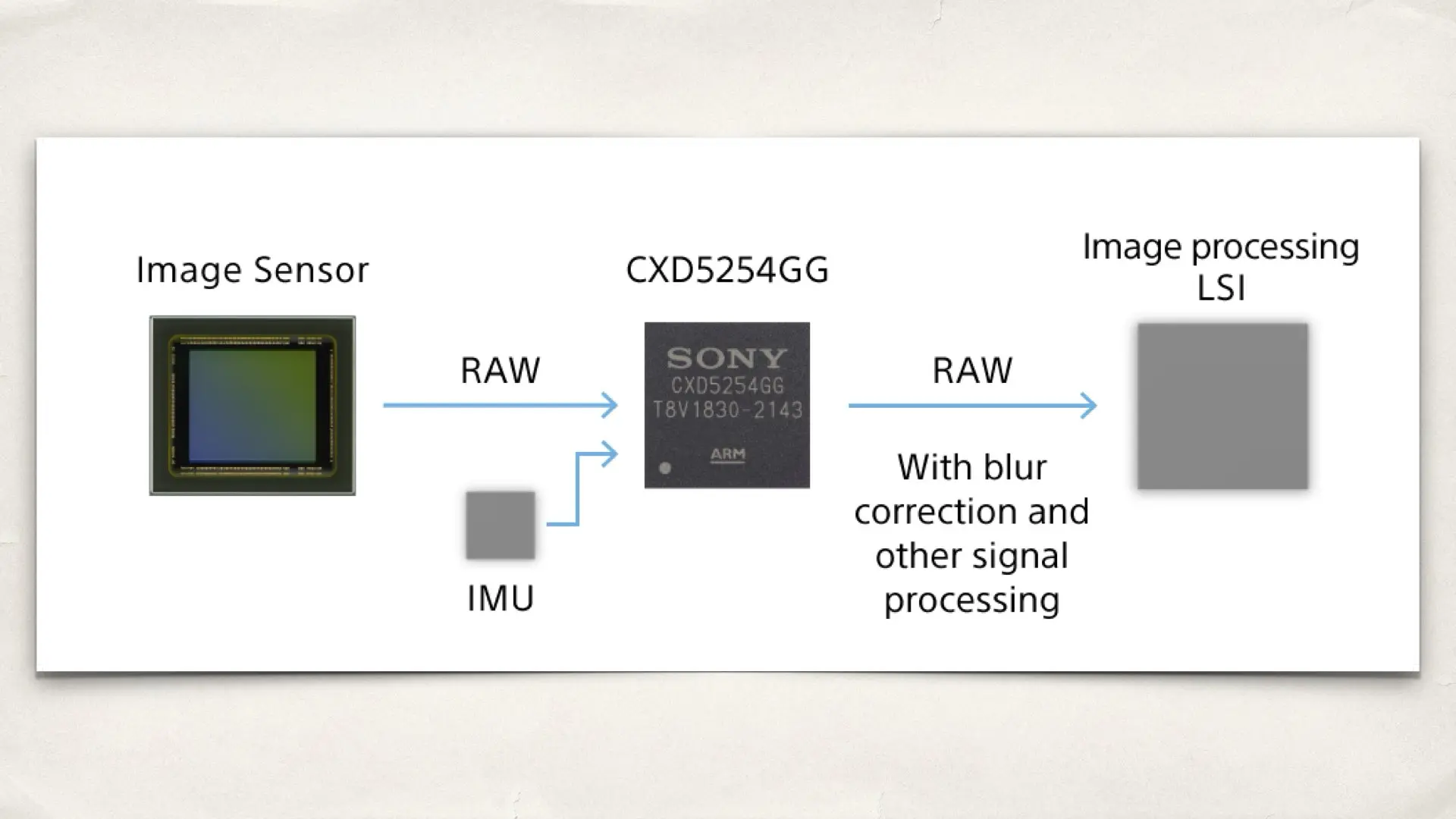

At the center of the showcase is a dedicated image stabilization LSI developed by Sony Semiconductor Solutions. Unlike traditional electronic stabilization, this chip operates extremely close to the image sensor. Instead of correcting motion after the image has already been processed, it stabilizes the signal as it is being captured. Live image data is combined with precise motion information from a 6-axis inertial measurement unit, allowing the system to correct shake, rotation, and horizon drift in real time.

The most important technical implications for video work are simple:

- Stabilization happens on raw or near-raw image data.
- Latency is kept to roughly 1.5 frames.
- Correction takes place before compression and color processing.

For filmmakers, this means fewer visible artifacts, less warping at the edges, and more natural motion during fast pans or handheld shooting.
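To put rough numbers on these ideas, here is a minimal Python sketch. It is not Sony's algorithm, which has not been published in detail. It only illustrates two pieces of arithmetic: what a 1.5-frame latency means in milliseconds, and how a gyro reading can be turned into the pixel shift a stabilizer would need to cancel, using a simple pinhole camera model. The function names, the 1 kHz gyro sample rate, the 50 mm lens, and the 4 µm pixel pitch are all illustrative assumptions. Only the 1.5-frame figure comes from the showcase.

```python
import math


def latency_ms(frames: float, fps: float) -> float:
    """Convert a latency expressed in frames into milliseconds."""
    return frames / fps * 1000.0


def integrate_gyro(rates_dps, sample_rate_hz: float) -> float:
    """Sum angular-rate samples (deg/s) into a rotation angle (deg).

    Simple rectangular integration; a real system would also handle
    sensor bias and drift, which this sketch ignores.
    """
    dt = 1.0 / sample_rate_hz
    return sum(rate * dt for rate in rates_dps)


def pixel_shift(angle_deg: float, focal_length_mm: float, pixel_pitch_um: float) -> float:
    """Image shift in pixels caused by a small camera rotation (pinhole model)."""
    focal_length_px = focal_length_mm * 1000.0 / pixel_pitch_um
    return focal_length_px * math.tan(math.radians(angle_deg))


# Latency of 1.5 frames at common frame rates.
for fps in (24, 30, 60, 120):
    print(f"{fps:>3} fps: {latency_ms(1.5, fps):.1f} ms")

# Assumed example: 8 gyro samples at 1 kHz, each reading 2 deg/s of yaw,
# on a 50 mm lens with 4 um pixels (all hypothetical values).
yaw_deg = integrate_gyro([2.0] * 8, sample_rate_hz=1000.0)
shift_px = pixel_shift(yaw_deg, focal_length_mm=50.0, pixel_pitch_um=4.0)
print(f"yaw {yaw_deg:.3f} deg -> shift of about {shift_px:.2f} px to cancel")
```

At 60 fps, 1.5 frames works out to 25 ms. Under the assumed lens and sensor values, even a rotation of a few hundredths of a degree moves the image by several pixels, which is why precise motion data matters on long lenses.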

Why showcasing matters more than the chip itself

Sony has many components in its catalog that never receive public attention. Showcasing is different. It suggests readiness, confidence, and intent. By demonstrating this stabilization chip, Sony is effectively inviting manufacturers to design systems around it. This moves the technology from quiet availability to active adoption. For the imaging industry, that shift often precedes real products by a few years. In other words, this is not about what you can buy today. It is about what Sony expects cameras to look like tomorrow.

Why hardware-level stabilization is becoming essential

As sensors continue to increase in resolution and readout speed, traditional electronic stabilization faces growing limitations. Cropping becomes more destructive. Software correction becomes easier to spot, especially in high-resolution footage. Dedicated stabilization hardware addresses this problem by acting earlier, when the image still has full integrity. It also reduces the computational load on the main image processor, which is increasingly busy with autofocus, noise reduction, and color science. This approach is particularly relevant for action-heavy filming, handheld telephoto work, vehicle-mounted and robotic cameras, and live production where latency cannot be tolerated. These are exactly the scenarios where filmmakers notice stabilization failures first.
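The cropping cost mentioned above is easy to quantify. The following Python sketch assumes a hypothetical electronic stabilizer that reserves a fixed fraction of each dimension as a motion margin. The 10% figure and the function names are illustrative assumptions, not values from Sony or any specific camera.

```python
def cropped_resolution(width: int, height: int, crop_fraction: float) -> tuple[int, int]:
    """Resolution left after cropping `crop_fraction` off each dimension."""
    keep = 1.0 - crop_fraction
    return round(width * keep), round(height * keep)


def retained_pixel_share(crop_fraction: float) -> float:
    """Share of the original pixel count that survives the crop."""
    return (1.0 - crop_fraction) ** 2


# Assumed example: a UHD frame with a 10% stabilization crop per dimension.
w, h = cropped_resolution(3840, 2160, crop_fraction=0.10)
share = retained_pixel_share(0.10)
print(f"{w} x {h}, {share:.0%} of the original pixels")
```

With these assumed numbers, a 3840 x 2160 frame shrinks to 3456 x 1944, so about 19% of the captured pixels are discarded before the footage is scaled back up to its delivery resolution.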

Where this technology is likely to appear first

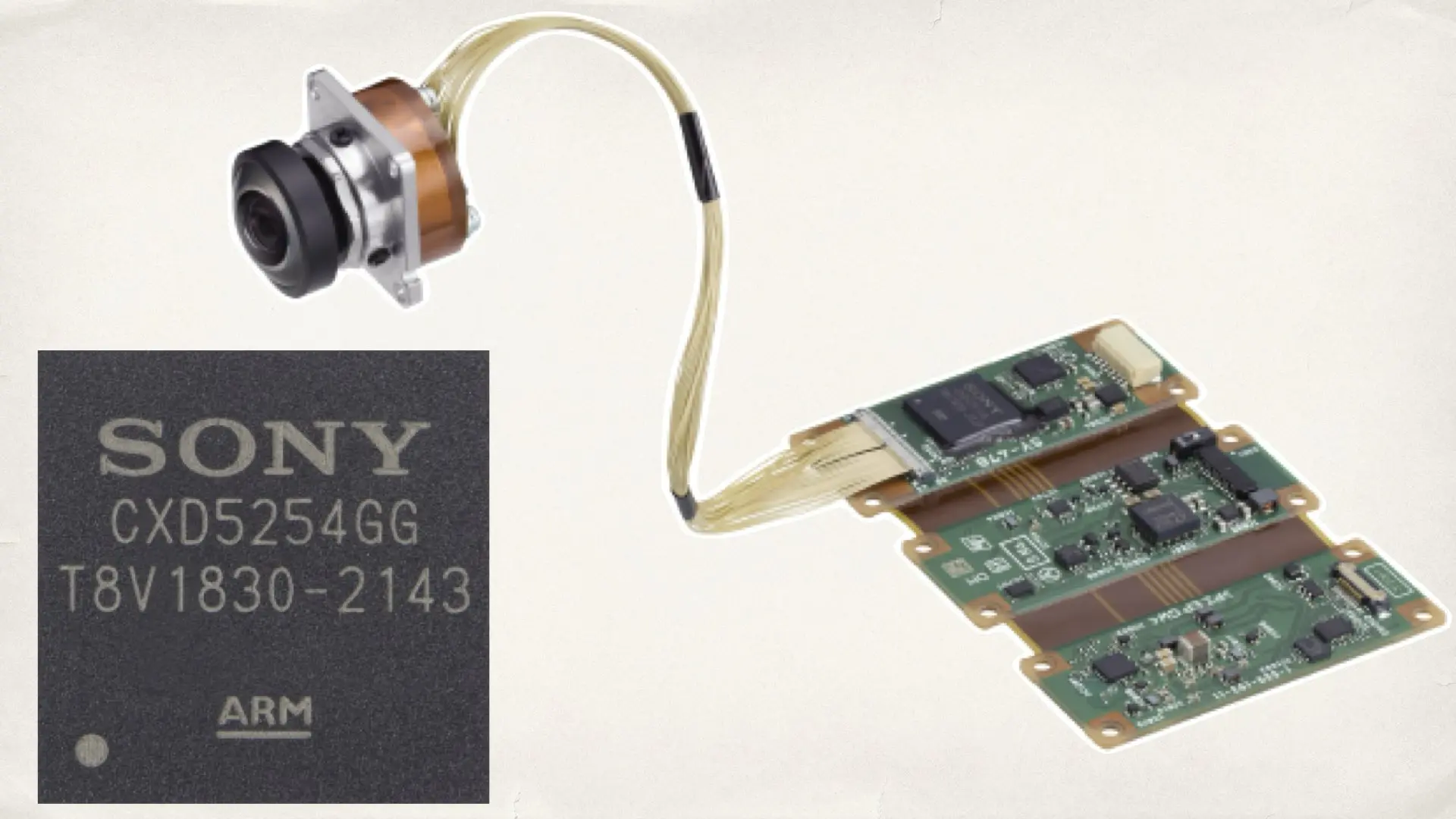

Sony is not positioning this chip for consumer mirrorless cameras right now. Its immediate impact will be felt in professional and embedded imaging systems. Early adoption is most likely in broadcast cameras, remote and robotic camera heads, drones, and compact camera modules, where mechanical stabilization is impractical. Historically, this is how Sony technology spreads. It proves itself in demanding professional environments before gradually influencing broader camera design. Furthermore, Sony already dominates image sensors. By pairing sensors with dedicated stabilization silicon, the company strengthens its influence over the entire imaging pipeline: sensor design, motion sensing, stabilization logic, and reference camera modules. For filmmakers, this kind of vertical integration usually results in quieter improvements. The footage looks better before post-processing. Motion feels more natural without relying on aggressive correction later. These changes rarely arrive with fanfare, but they reshape expectations over time.

Sony’s sensor roadmap points to deeper hardware-level control

Sony’s decision to showcase a dedicated stabilization chip does not stand alone. It fits into a broader pattern of sensor-level innovation that Sony has been openly demonstrating over the past months. In "Sony Demonstrates a 10K 105MP Global Shutter Sensor in Live Demo," Sony revealed a very high-resolution global shutter sensor capable of capturing extreme detail without motion distortion. While officially aimed at industrial and specialized imaging, the implications for future cinema workflows are clear. High resolution no longer has to come at the expense of motion integrity. That direction continues with "Sony IMX929 8K Global Shutter Sensor Reaches 200fps." This sensor demonstrates that high frame rates and high resolution can coexist through stacked sensor architecture and faster readout. For filmmakers, this removes long-standing tradeoffs between resolution, speed, and motion artifacts. Sony has also expanded sensor scale with "Sony Reveals IMX928 Large Format Global Shutter Sensor." With a large imaging area and true global shutter behavior, this sensor opens the door to more flexible framing, overscan, and distortion-free motion in demanding shooting environments. Seen together, these developments reinforce the same message as Sony’s stabilization chip. The company is moving image quality decisions closer to the sensor, relying less on downstream correction and more on hardware-level intelligence. For filmmakers, that trend matters far more than any single product announcement.

Final takeaway

Sony showcasing its new image stabilization chip is a subtle but meaningful signal. It points to a future where stabilization is handled closer to the source of the image, through dedicated hardware rather than software fixes. For filmmakers, especially those working with motion-intensive footage or compact rigs, this is the kind of behind-the-scenes development that quietly improves image quality years before it becomes obvious.