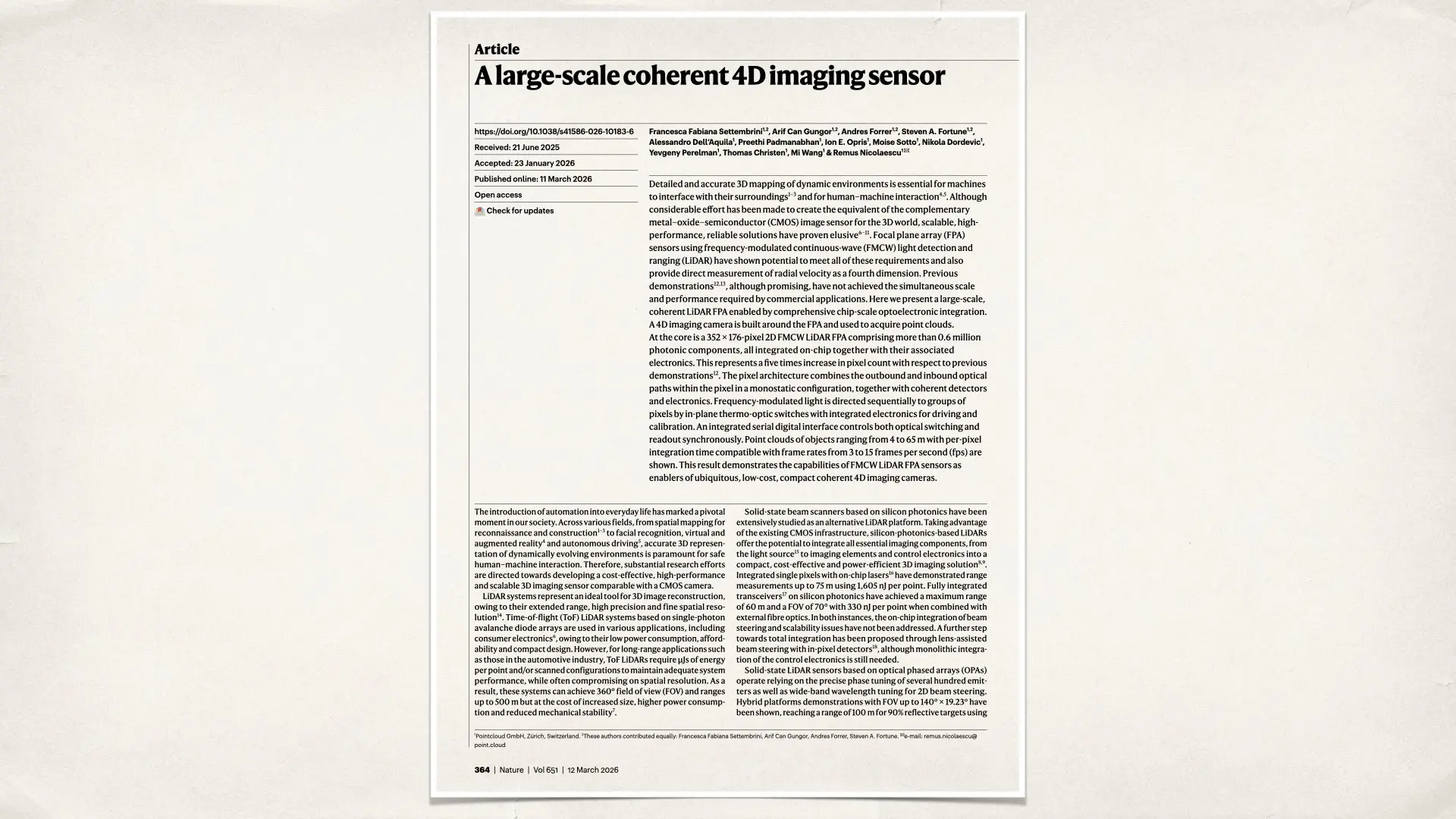

Scientists have unveiled a new type of imaging sensor that does something ordinary cameras cannot do. It can measure the shape of the world and the motion of objects at the same time. The device captures distance, movement, and spatial structure simultaneously, turning the camera into what researchers describe as a four-dimensional imaging system. The technology does not record photographs. Instead, it creates a detailed map of the environment while also measuring how objects move within that space. The concept could eventually change how machines perceive the world and could even influence future filmmaking tools.

What 4D imaging actually means

A normal camera records two-dimensional information. It captures width and height and converts light into pixels. Some modern devices add depth sensing using LiDAR technology, which introduces a third dimension by measuring how far objects are from the camera. The new sensor goes one step further. In addition to recording position in space, it also measures the velocity of objects along the line of sight. This means the sensor can determine whether something is moving toward the camera or away from it, and how fast. That additional layer of motion information is why the researchers call the system four-dimensional imaging.

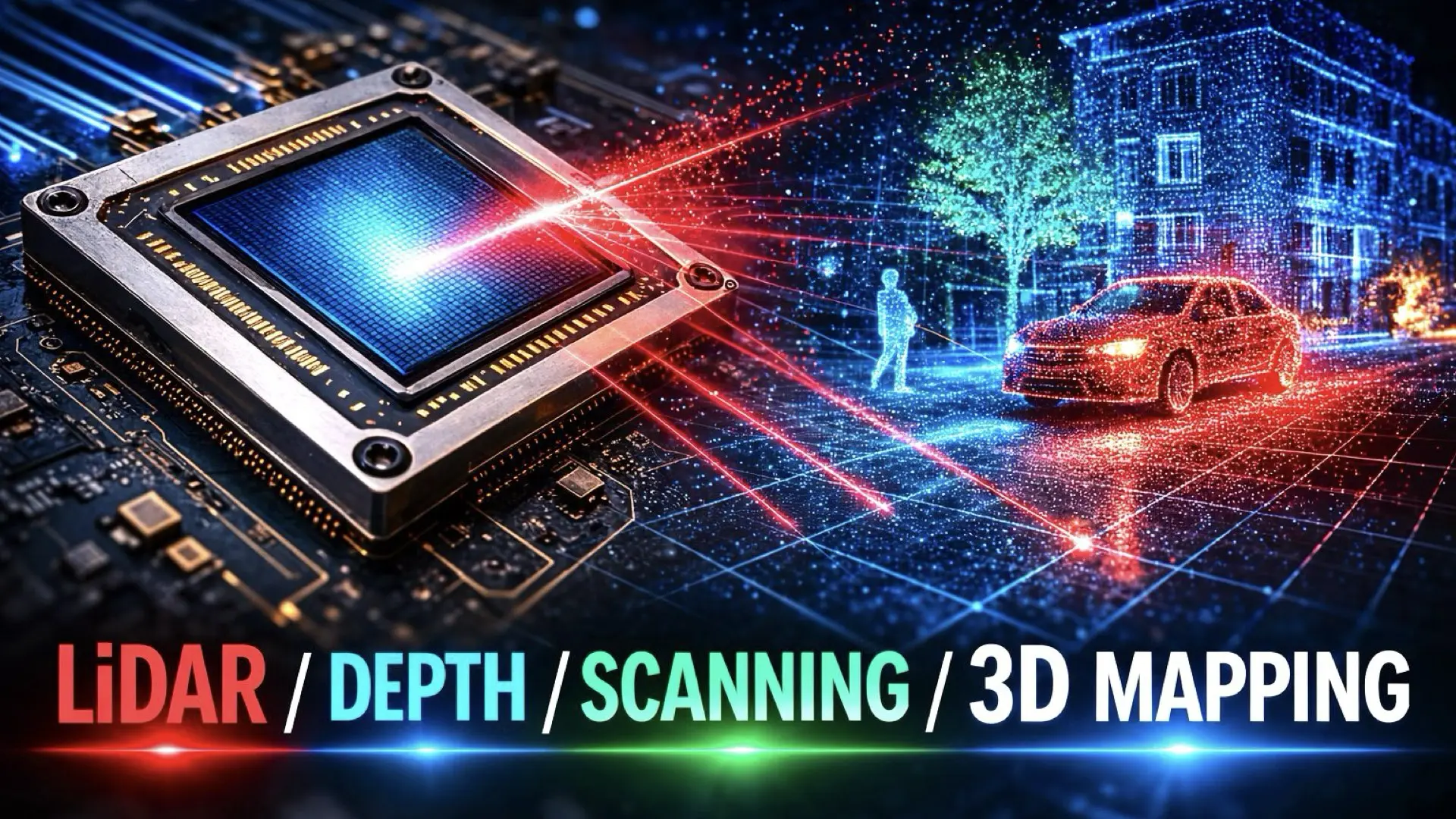

How the sensor works

Instead of passively capturing incoming light like a conventional image sensor, the system actively illuminates the environment with laser light whose frequency changes in a controlled pattern. When the light reflects off objects and returns to the sensor, the system compares it with the outgoing signal. The tiny frequency differences reveal the distance of the object and its movement relative to the sensor. By combining this information across thousands of sensing points, the device reconstructs a three-dimensional map of the scene known as a point cloud. Each point represents a measurement of the environment, including its position and motion.
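To make the idea concrete, here is a minimal sketch of how one sensing point could turn frequency shifts into distance and motion. It assumes a triangular frequency sweep (one rising ramp, one falling ramp), which is a common scheme in frequency-modulated LiDAR but is not confirmed as the one used in this device. The wavelength, sweep bandwidth, and ramp duration are hypothetical values chosen only for illustration.

```python
# Hypothetical parameters, chosen only for illustration (not from the device).
C = 299_792_458.0               # speed of light, m/s
WAVELENGTH = 1550e-9            # assumed laser wavelength, m
BANDWIDTH = 1e9                 # assumed frequency sweep per ramp, Hz
RAMP_TIME = 10e-6               # assumed duration of one ramp, s
SLOPE = BANDWIDTH / RAMP_TIME   # sweep rate, Hz per second


def beat_frequencies(distance_m, radial_velocity_mps):
    """Forward model: frequency difference seen on the rising and falling ramp.

    Positive radial velocity means the object moves toward the sensor.
    Assumes the distance term is larger than the Doppler term.
    """
    f_range = 2.0 * distance_m * SLOPE / C               # shift from round-trip delay
    f_doppler = 2.0 * radial_velocity_mps / WAVELENGTH   # shift from motion
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up, f_down):
    """Inverse step: recover distance and radial velocity from the two shifts."""
    f_range = (f_up + f_down) / 2.0
    f_doppler = (f_down - f_up) / 2.0
    distance_m = C * f_range / (2.0 * SLOPE)
    radial_velocity_mps = WAVELENGTH * f_doppler / 2.0
    return distance_m, radial_velocity_mps


# Simulate an object 30 m away approaching at 2 m/s, then recover both values.
f_up, f_down = beat_frequencies(30.0, 2.0)
distance, velocity = range_and_velocity(f_up, f_down)
print(f"distance = {distance:.3f} m, radial velocity = {velocity:.3f} m/s")
```

The average of the two shifts isolates the distance, while half their difference isolates the motion, which is why a single measurement can yield both at once.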

The resolution is surprisingly low

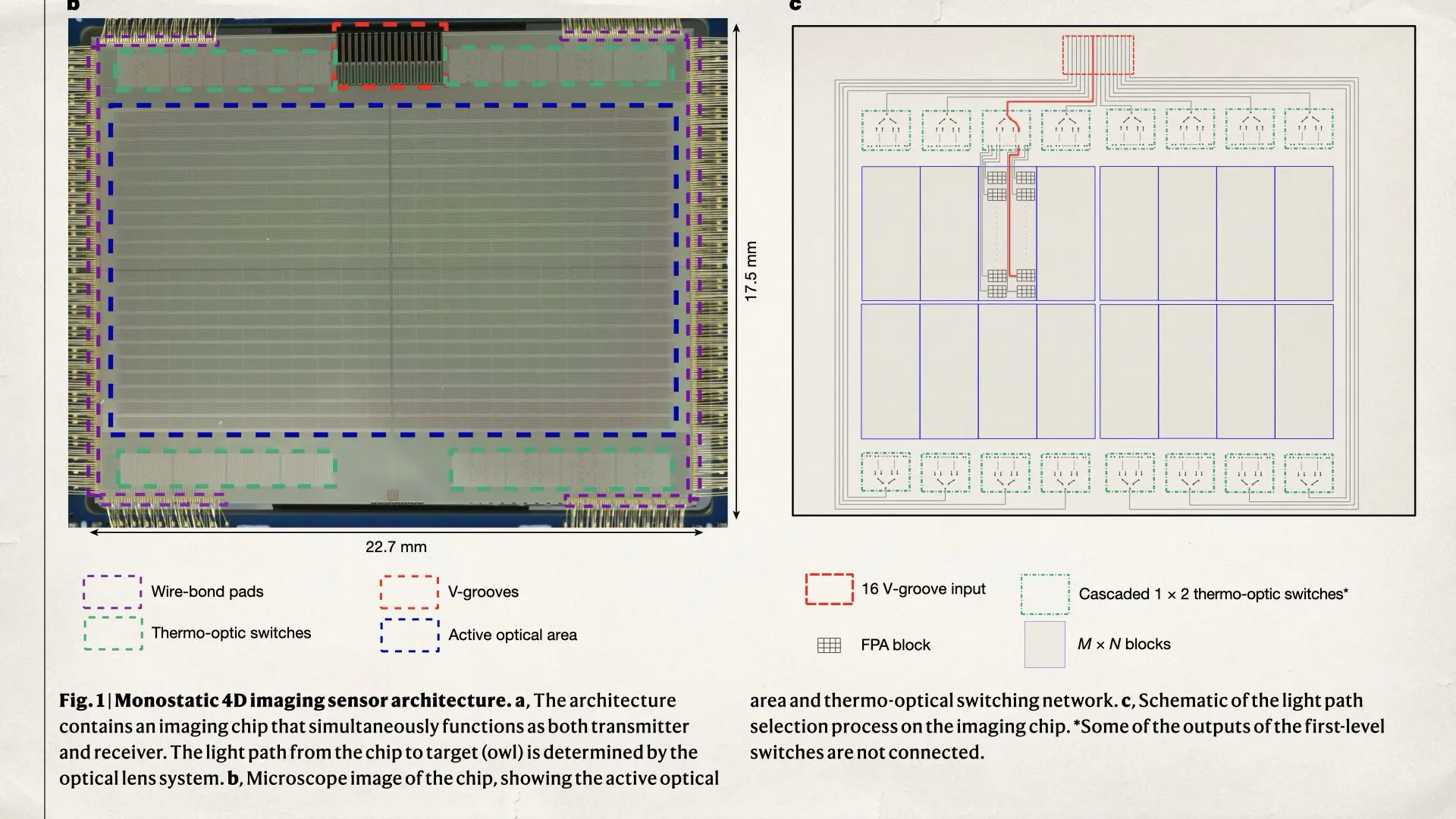

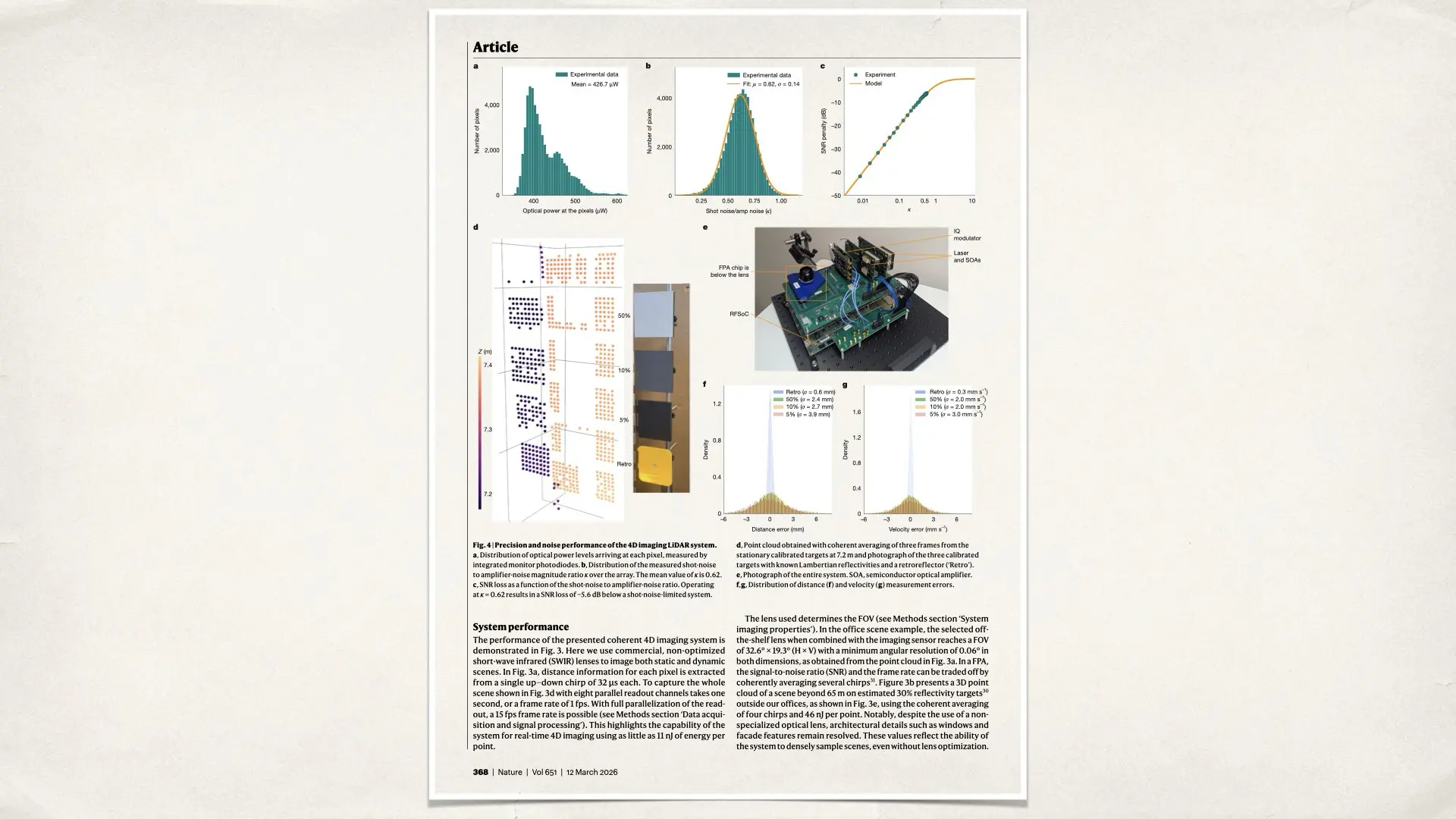

The sensor array contains 352 by 176 sensing elements, which equals about 62,000 pixels in total. In imaging terms, that is extremely low resolution, about 0.06 megapixels. Modern camera sensors typically capture tens of millions of pixels. However, each pixel in this sensor performs a much more complex task than a normal camera pixel. Instead of recording color or brightness, it measures distance and motion. The result is a spatial measurement system rather than a photographic imaging sensor.
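The arithmetic behind those figures is easy to check. The snippet below only uses the array dimensions quoted above.

```python
# Array dimensions as quoted in the article.
COLUMNS, ROWS = 352, 176

total_pixels = COLUMNS * ROWS
megapixels = total_pixels / 1_000_000

print(total_pixels, round(megapixels, 3))  # 61952 0.062
```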

A major step for LiDAR technology

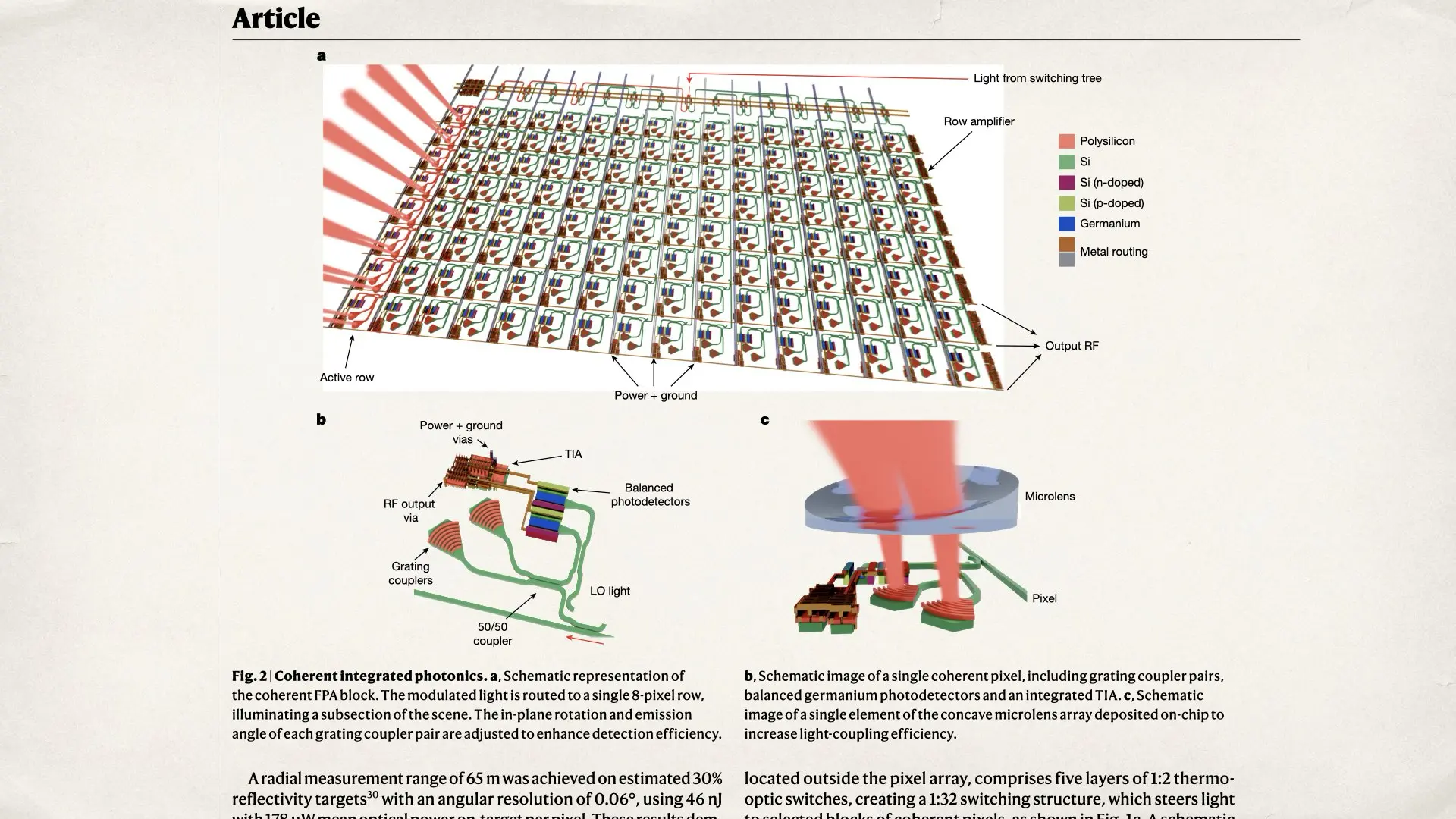

Although the resolution is low compared with cameras, the achievement is significant in the field of LiDAR. Previous coherent LiDAR systems contained far fewer sensing elements. This new design is a large step up in scale and integrates an enormous number of optical components onto a single chip. The device includes more than 600,000 photonic elements along with electronic circuits that control the measurement process. Integrating that many optical components into a compact chip is an engineering milestone and demonstrates that LiDAR sensors can become more scalable and compact.

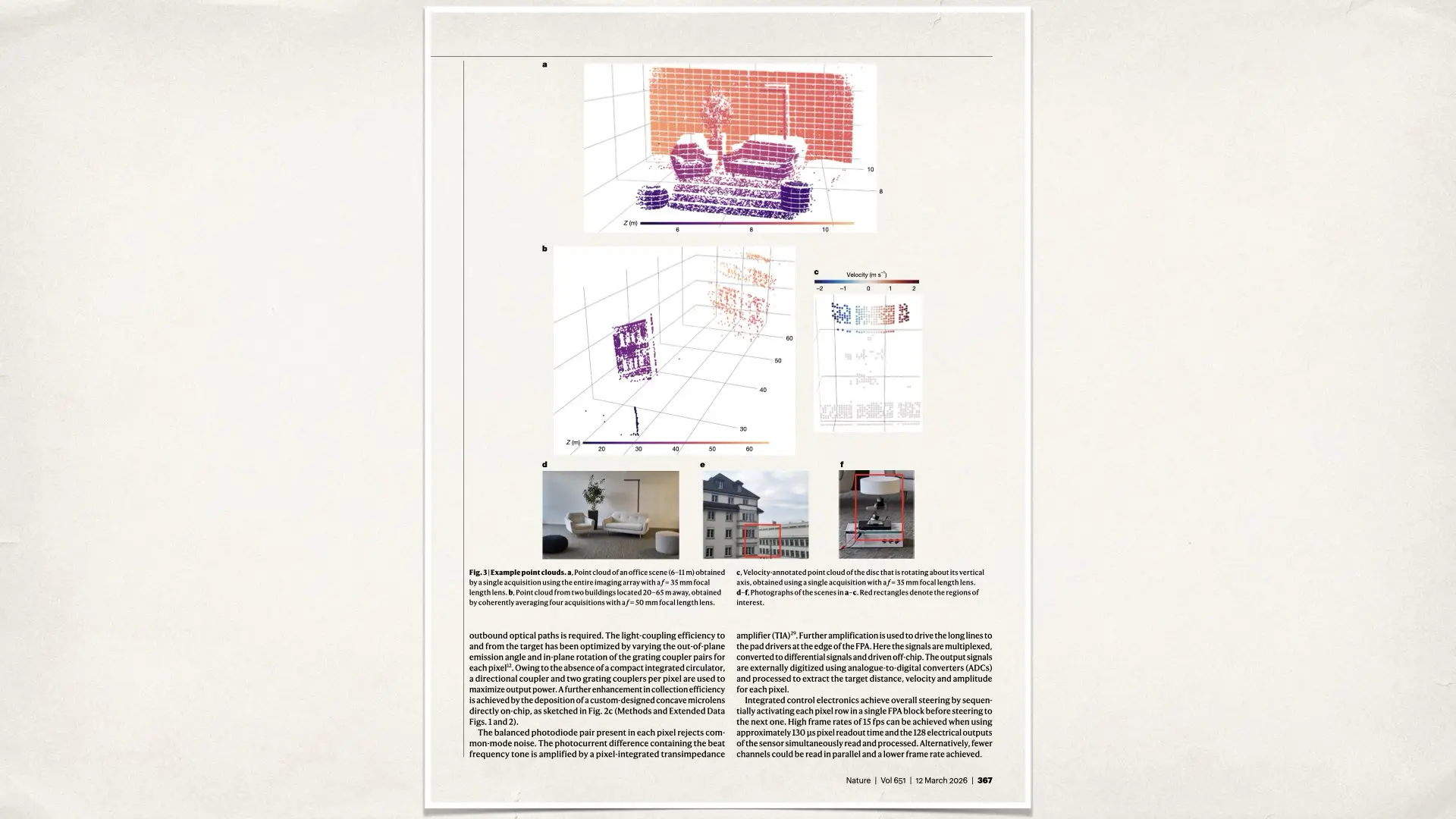

What the system can actually see

In demonstrations, the sensor was able to map scenes between about 4 meters and 65 meters away. The system generates detailed point clouds that reveal the structure of buildings, objects, and moving elements in the environment. The technology can even measure the velocity of moving objects with a precision on the order of millimeters per second. This ability to capture both geometry and motion is what makes the sensor fundamentally different from traditional depth sensors.
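As a rough illustration of what a point in such a point cloud might contain, the sketch below converts one sensing element's distance reading into a 3D position and attaches the measured radial velocity. The field-of-view angles, the `Point4D` type, and the `pixel_to_point` helper are hypothetical; only the array size and the approximate 4 to 65 meter range come from the figures quoted in this article.

```python
import math
from dataclasses import dataclass

COLS, ROWS = 352, 176                 # array size quoted in the article
MIN_RANGE_M, MAX_RANGE_M = 4.0, 65.0  # approximate demonstrated range
H_FOV_DEG, V_FOV_DEG = 60.0, 30.0     # assumed field of view, not from the source


@dataclass
class Point4D:
    """One point of the cloud: 3D position plus velocity along the line of sight."""
    x: float
    y: float
    z: float
    radial_velocity: float


def pixel_to_point(col, row, range_m, radial_velocity):
    """Map a sensing element and its distance reading to a 3D point.

    Returns None when the reading falls outside the demonstrated range.
    """
    if not (MIN_RANGE_M <= range_m <= MAX_RANGE_M):
        return None
    # Viewing angles of the element's center, measured from the optical axis.
    azimuth = math.radians((col + 0.5) / COLS * H_FOV_DEG - H_FOV_DEG / 2)
    elevation = math.radians(V_FOV_DEG / 2 - (row + 0.5) / ROWS * V_FOV_DEG)
    x = range_m * math.cos(elevation) * math.sin(azimuth)
    y = range_m * math.sin(elevation)
    z = range_m * math.cos(elevation) * math.cos(azimuth)
    return Point4D(x, y, z, radial_velocity)


point = pixel_to_point(176, 88, 10.0, -1.5)
print(point)
```

Repeating this for every sensing element in a frame would yield up to 61,952 points, each carrying its own motion measurement.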

Still far from replacing camera sensors

Although the sensor does not capture images, it could eventually become an important companion technology for cinema cameras. Modern visual effects workflows require accurate information about the geometry of the scene. Film productions often perform separate LiDAR scans of sets and environments so that digital elements can be integrated into the footage. A depth sensor integrated into a cinema camera could record that spatial information directly during filming. In the future, cameras might capture the image and the three-dimensional structure of the scene at the same time. That would make visual effects compositing, object tracking, and virtual production significantly easier.

However, despite its potential, this technology is still in the research stage. The resolution is extremely low compared with photographic sensors, and the system produces point clouds rather than images. It cannot replace the main sensor in a cinema camera. Instead, its most realistic future role would be as a secondary sensor that records spatial data alongside the image.

James Cameron would be pleased

The researchers believe the architecture could scale to larger arrays in future generations. If LiDAR sensors eventually reach hundreds of thousands or even millions of sensing points, they could become the depth equivalent of CMOS camera sensors. At that point, cameras might capture far more than images. They could record the full geometry and motion of the world, transforming how machines, robots, and creative tools perceive reality. For sure, James Cameron will be pleased 🙂