No, this is not clickbait. A research team has actually built an imaging system that can reach an effective speed of 685 billion frames per second. The cost is under $500. That sounds like a revolution in camera technology. The catch is that this is not a normal video camera. It captures a single very fast event, and only for a very short time. Here’s the research.

What the researchers actually did

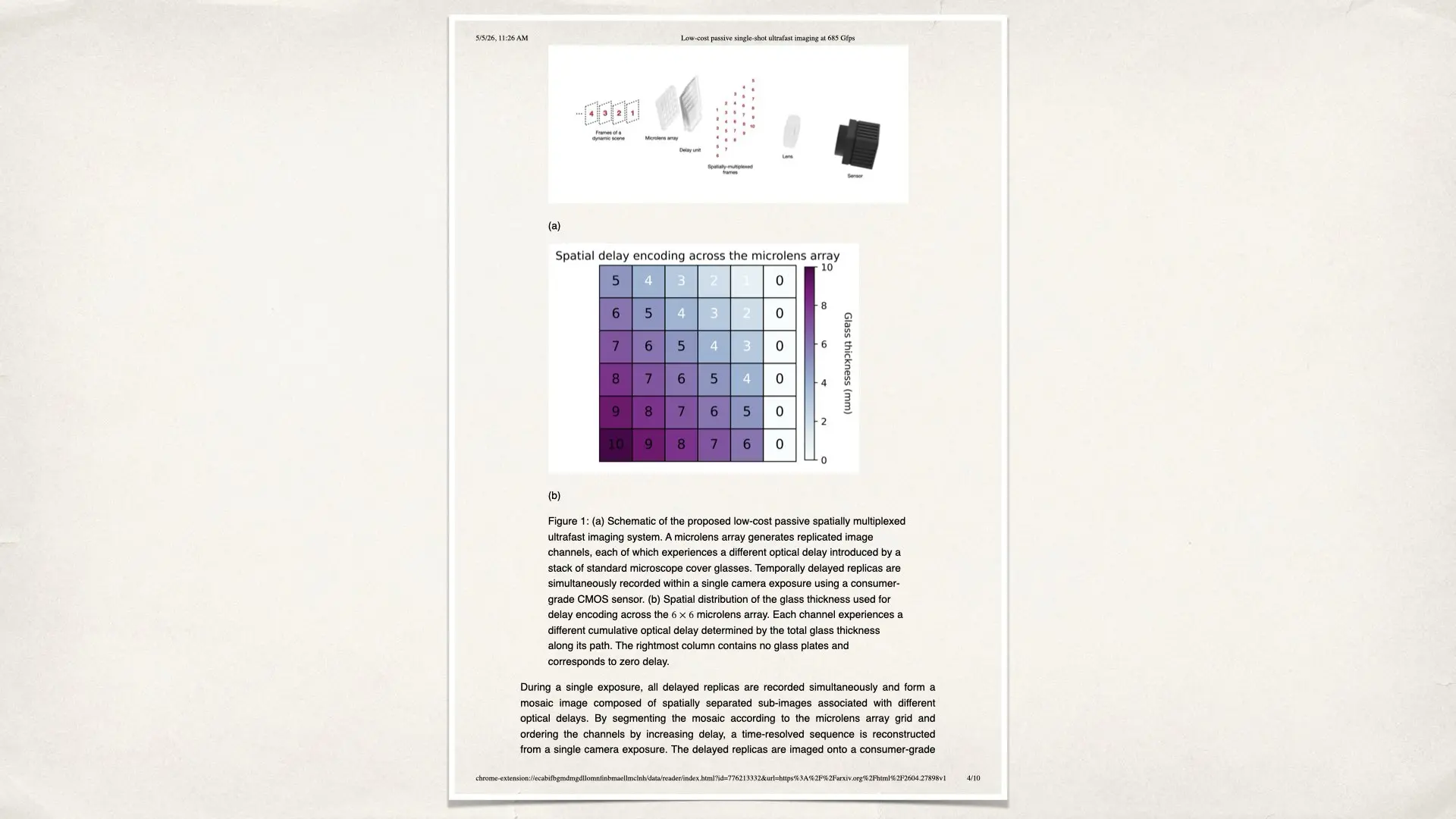

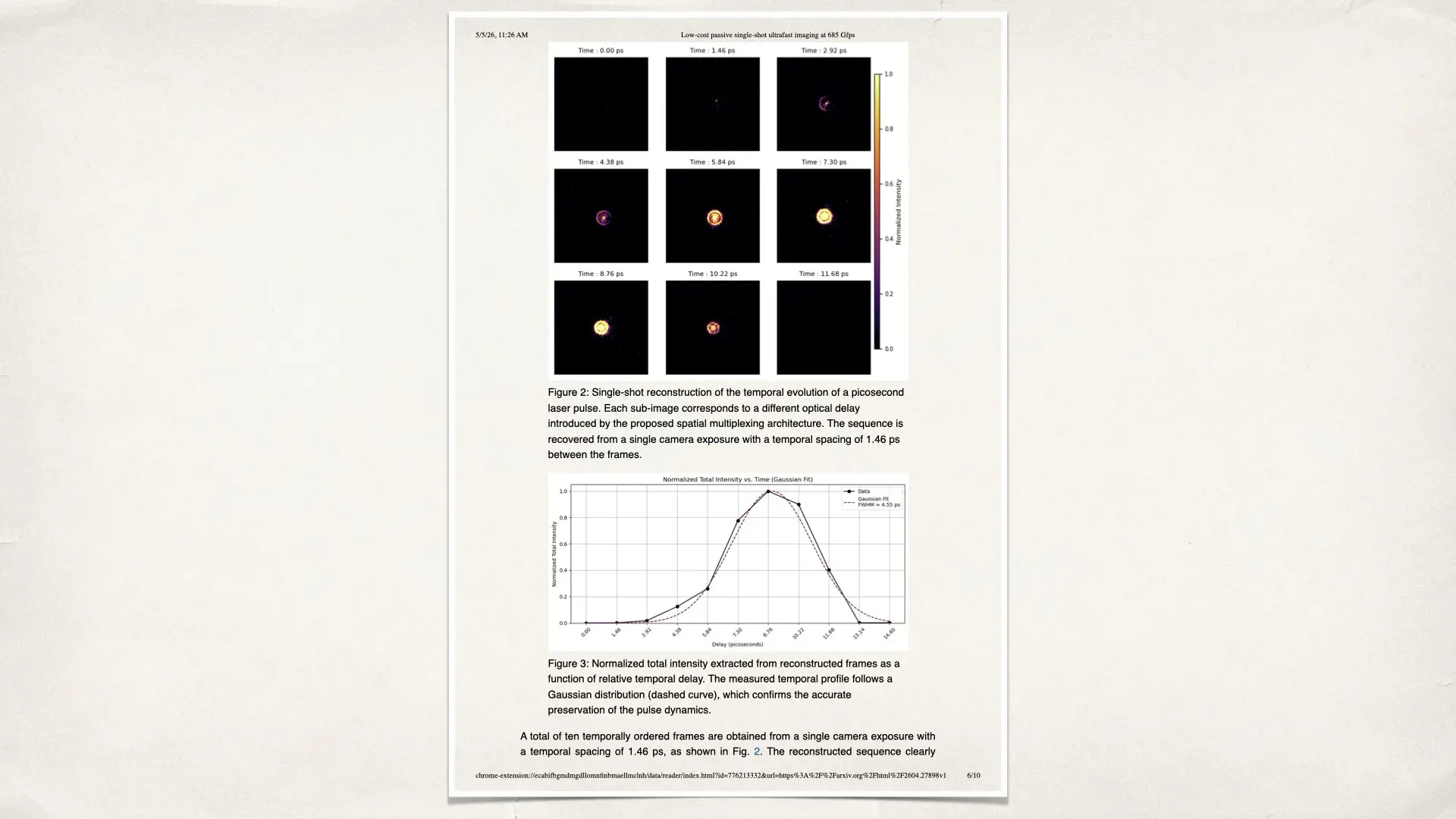

The researchers wanted to record something extremely fast, like how a laser pulse moves. Normal cameras cannot do this because their sensors are too slow. Instead of making a faster sensor, they used a different idea. They changed how light reaches the sensor. They used three simple parts: a microlens array, thin pieces of glass, and a standard CMOS sensor. The microlens array splits the image into many small copies. Each copy goes through a slightly different thickness of glass. Because light slows down inside glass, each copy arrives at a slightly different time. All these copies are recorded at once in a single photo.

Think of it like this: you take one photo, but inside that photo there are 10 small images. Each one shows the scene at a slightly different moment. So instead of recording a video, you capture time all at once and then separate it later. This is how they reach such an extreme “frame rate”.
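To put a rough number on the glass delay, here is a small Python sketch. It assumes ordinary glass with a refractive index of about 1.5 and made-up plate thicknesses in 1 mm steps. Neither value comes from the research, and the function name is only for illustration.

```python
# Rough estimate of how much extra time light needs to cross a glass plate
# compared with crossing the same distance in air.
# ASSUMPTIONS (not from the research): refractive index 1.5, 1 mm thickness steps.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def extra_delay_s(thickness_m, n_glass=1.5, n_air=1.0):
    """Extra travel time caused by replacing air with glass of this thickness."""
    return (n_glass - n_air) * thickness_m / SPEED_OF_LIGHT_M_PER_S


# Hypothetical plates: no glass, 1 mm, 2 mm, 3 mm.
for thickness_mm in (0, 1, 2, 3):
    delay_ps = extra_delay_s(thickness_mm * 1e-3) * 1e12
    print(f"{thickness_mm} mm of glass -> about {delay_ps:.2f} ps of extra delay")
```

Under these assumptions, each extra millimetre of glass adds roughly 1.7 picoseconds, which is why thin glass steps are enough to produce picosecond-scale spacing between the copies.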

Why is the number so high?

The time difference between each image is about 1.46 picoseconds. That is 0.00000000000146 seconds. Because the time steps are so small, when you convert that into frames per second, you get 685 billion FPS. This number is correct, but it needs context. It is not a continuous video. It is a very short burst of frames.
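The conversion from time step to frame rate is a single division. A minimal sketch of that arithmetic, using the 1.46 picosecond spacing quoted above (the variable names are only for illustration):

```python
# Convert the time gap between two neighbouring frames into frames per second.
frame_interval_s = 1.46e-12  # 1.46 picoseconds between frames, as quoted in the article

frames_per_second = 1.0 / frame_interval_s

print(f"{frames_per_second:.3e} frames per second")
print(f"about {frames_per_second / 1e9:.0f} billion FPS")
```

This is an "effective" frame rate: it describes how closely spaced the frames are, not how many frames the system can actually record in one second.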

Why the cost is important

Most ultrafast imaging systems are extremely expensive. They can cost tens or hundreds of thousands of dollars. They often need special sensors and complex processing. Here, the researchers used standard glass, a commercial microlens array, and a regular sensor. This is why the cost is so low. This is the real breakthrough: not just the speed, but how simple the system is.

The catch

There are several important limitations: First, it only captures about 10 frames. That is a very short sequence. Second, the total time it records is only about 13 picoseconds. That is useful for laser physics, but not for real-world motion. Third, each frame has a lower resolution. The sensor is divided into many small images, so each one has fewer pixels. This means you lose detail. So is this a real camera? Technically yes. But practically, no. You cannot use this system to shoot video, film, or even high-speed sports. It works in a controlled lab environment, mainly with light-based experiments.
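The first and third limitations can be checked with simple arithmetic. The sketch below uses the article's figures of 10 frames and 1.46 picoseconds per step. The 12-megapixel sensor is a hypothetical value chosen only to show how the pixel budget gets divided; the real sensor size is not given here.

```python
# Back-of-the-envelope check of the recording window and per-frame detail.
FRAME_INTERVAL_S = 1.46e-12   # spacing between frames, from the article
NUM_FRAMES = 10               # approximate frame count, from the article
SENSOR_PIXELS = 12_000_000    # HYPOTHETICAL sensor size, for illustration only

# Ten frames are separated by nine intervals.
window_s = (NUM_FRAMES - 1) * FRAME_INTERVAL_S

# Upper bound: the sub-images share one sensor, so each gets at most an equal slice.
pixels_per_frame = SENSOR_PIXELS // NUM_FRAMES

print(f"recording window: about {window_s * 1e12:.1f} ps")
print(f"at most {pixels_per_frame:,} pixels per frame")
```

Nine steps of 1.46 picoseconds give a window of roughly 13 picoseconds, which matches the figure above. The per-frame pixel count is an upper bound, since gaps between the sub-images would reduce it further.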

Why this still matters

Even with these limitations, this work is important. It shows a different way of thinking about imaging. Instead of making sensors faster, you can change how light is captured before it reaches the sensor. This idea could influence future camera design. It suggests that performance improvements may come from optics, not just electronics. For cinema and real-world imaging, this does not change anything today. But it shows where things could go. Cameras are no longer just about sensors. They are about how information is encoded, captured, and processed. This experiment is a simple example of that shift. A $500 system reaching 685 billion FPS sounds unbelievable. The reality is more specific and more interesting. It is not a faster camera in the usual sense. It is a smarter way to capture time using basic optical principles. That is what makes it worth paying attention to. Go here for the research.

Not sure if I misunderstood how this works, but as I understood the article, light from an object hits an array that splits it into multiple frames. The light then goes through glass of different thicknesses before hitting the sensor.

But as I read it, the light that hits the array and gets split will just be copies of the same light. Even if you slow some of it down, it still originated from the same time frame, the same moment in time.

How can that show any variation of the subject?

Each optical path delays the light by a tiny amount, so every channel captures a slightly different moment in time rather than the same instant.